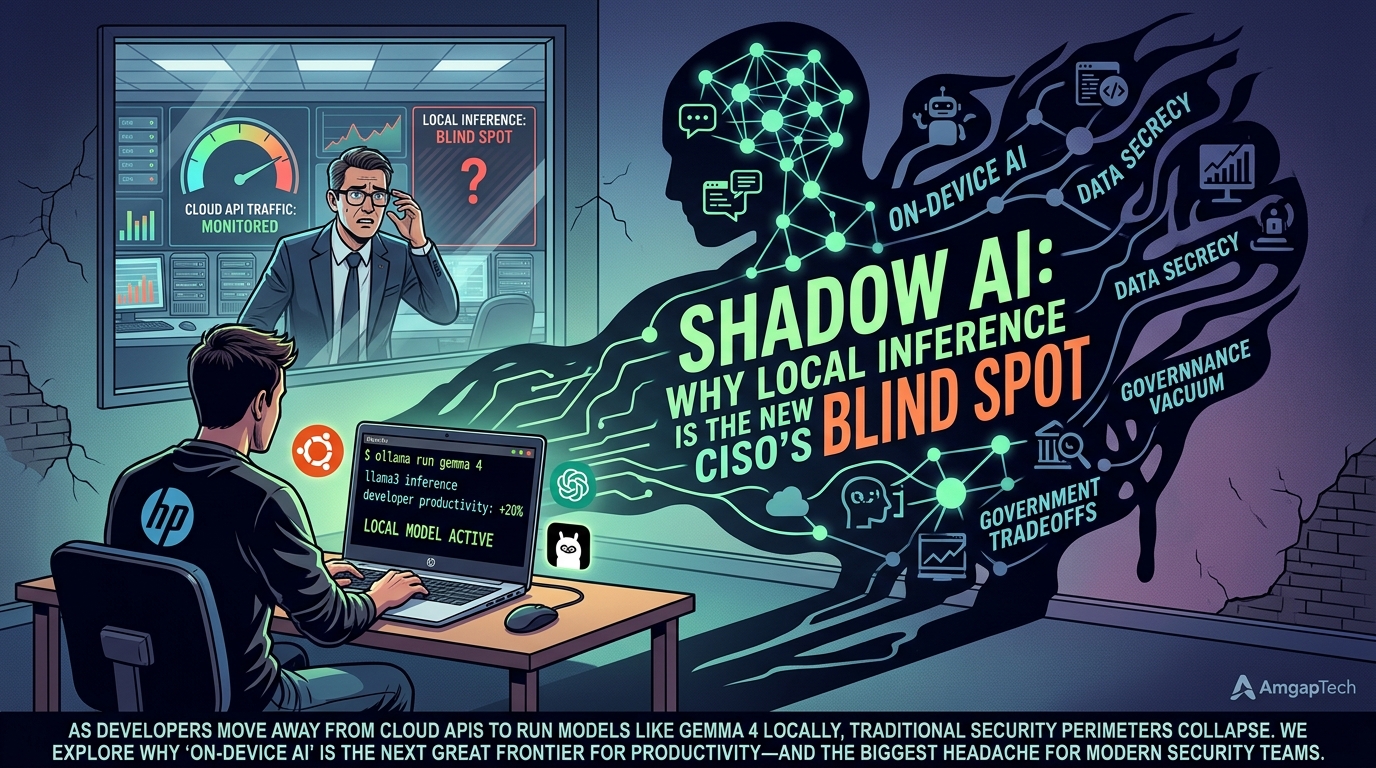

Shadow AI: Why Local Inference is the New CISO's Blind Spot

The Great Migration Inward

For the last two years, security teams have focused on "Data Out": preventing employees from pasting sensitive company secrets into a ChatGPT window. They built firewalls, set up data loss prevention (DLP) triggers, and monitored API traffic.

But while the CISOs were watching the gates, the developers moved the engine inside.

With the rise of high-performance local models like Gemma 4 and tools like Ollama, developers are increasingly running inference on their own hardware. It's faster, it works offline, and it incurs no API fees. But this "Shadow AI" creates a massive blind spot: how do you secure data that never leaves a developer's laptop?

The Productivity Loophole

Developers are inherently efficient: if a tool makes them 20% faster, they will use it. Local inference is the ultimate productivity hack. It removes the round-trip latency of the cloud and allows deep integration with local files without the "privacy guilt" of sending them to a third-party server.

However, as VentureBeat recently pointed out, this creates a governance vacuum. When a developer runs a model on their own device, the enterprise can no longer answer basic questions:

What data is being processed?

Is the model itself compromised or "poisoned"?

Are the outputs being used to generate insecure code that bypasses internal linting?
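Endpoint tooling cannot answer those questions on its own, but it can recover the first layer of visibility: whether a local inference server is running at all. The Python sketch below is a minimal probe, assuming the runtime is Ollama listening on its default port, 11434. The function names and the port map are hypothetical, and an open port is a hint worth investigating, not proof of what is serving it.

```python
import socket

# Assumption: Ollama on its default port. Other local runtimes listen on
# different ports; extend this hypothetical map for your own fleet.
KNOWN_LOCAL_LLM_PORTS = {"ollama": 11434}


def port_is_listening(port: int, host: str = "127.0.0.1", timeout: float = 0.5) -> bool:
    """Return True if a TCP connection to host:port succeeds."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Refused, timed out, or unreachable: treat as "nothing listening".
        return False


def detect_local_llm_servers(host: str = "127.0.0.1") -> list[str]:
    """Return the names of runtimes whose default port is open on host."""
    return [
        name
        for name, port in KNOWN_LOCAL_LLM_PORTS.items()
        if port_is_listening(port, host)
    ]


if __name__ == "__main__":
    found = detect_local_llm_servers()
    print("Possible local inference servers:", found or "none detected")
```

In a real fleet, a probe like this would more plausibly run from existing endpoint-management tooling and report upward than be something each developer runs by hand.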

Gemma 4 and the Rise of "Edge Intelligence"

The release of Google's Gemma 4 has accelerated this trend. Designed specifically for "Edge AI," these models are small enough to run on a standard dev machine (like an HP Laptop 15 running Ubuntu) but powerful enough to handle complex reasoning.

At AmgapTech, we see the power of this every day. We can pull a model, run a private instance, and build entire features without a single outbound request. For an enterprise, though, this means its "security perimeter" has effectively moved from the cloud firewall to the individual developer's terminal. If the developer's local environment is compromised, the "Shadow AI" becomes a silent partner in the breach.
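For readers who haven't tried it, "without a single outbound request" looks roughly like this in practice. The sketch below assumes a default Ollama install serving its REST API on 127.0.0.1:11434; it sends a non-streaming prompt to the `/api/generate` endpoint and refuses any URL whose host is not `localhost` or a loopback IP address. The helper names are hypothetical, and the guard is a cheap policy check, not a network control.

```python
import ipaddress
import json
import urllib.request
from urllib.parse import urlparse

# Assumption: default Ollama install with no OLLAMA_HOST override.
OLLAMA_URL = "http://127.0.0.1:11434/api/generate"


def assert_loopback(url: str) -> None:
    """Raise ValueError unless the URL's host is localhost or a loopback IP."""
    host = urlparse(url).hostname or ""
    if host == "localhost":
        return
    try:
        if ipaddress.ip_address(host).is_loopback:
            return
    except ValueError:
        pass  # host is a DNS name, not an IP literal
    raise ValueError(f"refusing non-local inference endpoint: {host!r}")


def build_request(model: str, prompt: str, url: str = OLLAMA_URL) -> urllib.request.Request:
    """Build a non-streaming generate request for a local Ollama server."""
    assert_loopback(url)
    body = json.dumps({"model": model, "prompt": prompt, "stream": False}).encode()
    return urllib.request.Request(
        url,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(model: str, prompt: str, url: str = OLLAMA_URL) -> str:
    """Send the prompt and return the model's text (needs a running server)."""
    with urllib.request.urlopen(build_request(model, prompt, url), timeout=120) as resp:
        return json.loads(resp.read())["response"]
```

With a model already fetched via `ollama pull`, a call would look like `generate("<model-tag>", "Explain this stack trace")`, where `<model-tag>` stands for whatever tag you pulled.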

The Vocabulary Gap: Why Definition Matters

As TechCrunch highlighted in its recent AI glossary, we are dealing with a "terminology crisis." Concepts like hallucinations and retrieval-augmented generation (RAG) are well understood in the dev lounge but terrifying to a compliance officer.

The "Shadow AI" risk isn't just about data leaking out; it's about incorrect, unvetted information leaking in. Suppose a local model hallucinates a security protocol or suggests a deprecated, vulnerable library, and that code is committed without the oversight that a sanctioned, cloud-based AI pipeline might apply through its filters. You have just introduced a silent vulnerability into your production codebase.
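One inexpensive backstop for the "deprecated, vulnerable library" case is a commit-time check on pinned dependencies. The sketch below scans `requirements.txt`-style text for exact `name==version` pins that appear on a denylist. The denylist contents, the package name `examplelib`, and the function names are all hypothetical; in practice an advisory-backed scanner such as pip-audit would supply the data instead of a hard-coded dict.

```python
import re

# Hypothetical denylist: package name -> versions the security team has banned.
# A real deployment would pull this from an advisory feed.
DENYLIST = {
    "examplelib": {"1.0.0", "1.0.1"},
}

# Matches exact pins such as "requests==2.31.0"; ranges like ">=" are ignored.
PIN = re.compile(r"^\s*([A-Za-z0-9_.\-]+)\s*==\s*([A-Za-z0-9_.\-+!]+)")


def normalize(name: str) -> str:
    """PEP 503-style normalization so 'Example_Lib' matches 'example-lib'."""
    return re.sub(r"[-_.]+", "-", name).lower()


def find_banned_pins(requirements_text: str, denylist=DENYLIST) -> list[tuple[str, str]]:
    """Return (package, version) pairs pinned in the text that are denylisted."""
    banned = {normalize(k): v for k, v in denylist.items()}
    hits = []
    for line in requirements_text.splitlines():
        line = line.split("#", 1)[0]  # drop trailing comments
        m = PIN.match(line)
        if m:
            name, version = normalize(m.group(1)), m.group(2)
            if version in banned.get(name, set()):
                hits.append((name, version))
    return hits
```

Wired into a pre-commit hook, a non-empty result would block the commit regardless of whether the code came from a human, a cloud assistant, or a local model.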

The Hard Truth: You Can't Ban Local AI

The core problem is simple: you can't block what you can't see. CISOs who try to ban local LLMs will simply drive their best developers underground. The cat is out of the bag, and you cannot stop a developer from running a binary on their own machine.

The solution isn't "blocking"; it's orchestration. Companies need to provide secure local environments and a standardized set of model weights that have been vetted for safety. We need to move from "Shadow AI" to "Managed Local AI."
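Vetting weights only helps if each machine can confirm it is running the vetted file. A minimal way to do that, sketched below under the assumption that models ship as ordinary files on disk, is to hash the weights with SHA-256 and compare the digest against an allowlist the security team maintains. `APPROVED_WEIGHTS`, `sha256_file`, and `is_approved` are hypothetical names, and the allowlist is deliberately left empty.

```python
import hashlib
from pathlib import Path

# Hypothetical allowlist maintained by the security team:
# SHA-256 hex digest of each reviewed weights file -> human-readable label.
# Populate with digests of files that have passed review.
APPROVED_WEIGHTS: dict[str, str] = {}


def sha256_file(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash the file in 1 MiB chunks so large weights never sit in memory."""
    h = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            h.update(chunk)
    return h.hexdigest()


def is_approved(path: Path, allowlist: dict[str, str] = APPROVED_WEIGHTS) -> bool:
    """True only if the file's digest appears in the allowlist (read-only use)."""
    return sha256_file(path) in allowlist
```

Streaming keeps memory flat even for multi-gigabyte weights. The allowlist itself would need to reach each machine through a channel developers cannot edit, or the check proves little.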

Conclusion: The New Perimeter is the Machine

The shift to on-device inference is inevitable. It’s the natural evolution of the "developer as an architect" model we’ve been discussing. But with that power comes a new kind of responsibility.

At AmgapTech, we believe that the most secure codebase is one where the AI tools are integrated, transparent, and locally governed. We don't hide our local models; we audit them.

Is your team running AI in the shadows, or are you building the light?

Sources
